
Compare Docker containers vs. VMs for app dev environments

While there are important technical distinctions between VMs and containers, the reasons developers opt for the latter are often more philosophical, or cultural, in nature.

A software engineer installs Docker and is blown away by how quickly she can provision an instance of the desired stack. Then, the engineer is struck by a thought: "Haven't I done this already with a virtual machine?"

Container software provides a fast way to provision, use and move around applications, but the core concepts around containerization of the stack are not new. The trusty VM can technically achieve all the same things that containers can, including compartmentalization of entire stacks, portability and full-stack deployments.

The reason VMs were not as widely adopted by developers could be an artifact of how those VMs were delivered to them rather than how they actually function. This means the preference for containers over VMs could be both philosophical and technical in nature.

Technology comparison

To understand the differences between Docker containers vs. VMs, let's start with the technical aspects.

- Hypervisor: VMs depend heavily on the hypervisor: They are tied to where the hypervisor is installed and to its version. There are plenty of conversion tools, but the conversion process has to happen before you can move from one hypervisor to another. Meanwhile, containers are hypervisor-independent but do have some dependencies. For example, a Docker container still needs Docker; it won't necessarily run on any container engine.

- Back-end support: VMs provide broader support for the back end of applications. It is not very practical to create Docker containers for your back end, nor does it really fit with the principles of containers, which should have relatively short lifespans. Ideally, upon every release, all current production containers are destroyed and replaced with new ones.

- Size: Setting aside back ends, which can bloat any container, containers are substantially smaller. In a one-for-one comparison of just a front-end application deployment, a Docker container is much smaller than its equivalent VM. This means that provisioning, which requires a copy of the physical image file, is much faster with containers.

- Speed: Performance degradation relative to the host OS is an important consideration. VMs incur a loss, while containers arguably have none -- excluding the small overhead for the Docker service. This gives containers a performance advantage over VMs. However, this is often a moot point because most Docker instances run on VMs. The container layer itself may add no loss of speed, but the containers inherit the degradation of the VM relative to its bare-metal host. Generally, the best practice is to run Docker on a VM instead of the local machine.

- Host OS: A VM is fully isolated from the host OS, while Docker has isolated memory and disk space -- although containers use the same host OS kernel. Additionally, Docker does not support the breadth of host or container OS options that a VM does. If you are a Microsoft shop, you have more options running Docker on Windows Server 2016, but VMs still have more options than containers.

- Networking: VMs have a broader set of networking capabilities. VMs support virtual networks, which can be captured in a snapshot along with a collection of networked VMs. This offers a lot more flexibility but is probably more relevant for desktop environments and less for application development. Containers can also be networked, but the technology is less mature and configuration is more complex.

- Images: VM snapshots and container images are both ways to maintain a library of instances that developers can provision in the future. One VM feature that is not possible with Docker is the ability to suspend and maintain memory state. This can fast-track full-stack deployments and help address application bugs. Docker supports pause and unpause commands, but the paused state is still only local to the active instance.

- Management: Both VMs and Docker containers have unique management considerations. Developers need to treat Docker containers like application components: They should version them and store them in a repository. Docker containers are built in layers and have a lot of moving parts, which makes it important to track all changes. VMs also change, but less frequently. Compared to the number of VMs in a typical hypervisor setup, a typical Docker setup will likely have more containers. So, container sprawl is more likely than VM sprawl.

- Portability: Docker containers are generally more portable than VMs because of size, networking and other elements. While you have some movement with VMs, it is not as seamless as provisioning a new instance of a Docker container anywhere you want without any overhead.
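To make the back-end support and management points above more concrete, here is a minimal Python sketch of a replace-on-release routine: Every running container is stopped, removed and started again from a newly versioned image. It only builds the Docker command lines (`docker pull`, `docker stop`, `docker rm`, `docker run`) and does not execute them. The registry address, repository name, version number and container names are hypothetical placeholders, and a real pipeline would likely add health checks and rollback.

```python
from typing import List


def image_ref(repository: str, version: str) -> str:
    """Return a versioned image reference such as 'repo/name:1.4.0'."""
    if not version:
        raise ValueError("a release must carry an explicit version tag")
    return f"{repository}:{version}"


def replacement_plan(repository: str, new_version: str,
                     running: List[str]) -> List[List[str]]:
    """Build the docker CLI calls that swap every running container
    for a fresh one started from the newly released image."""
    image = image_ref(repository, new_version)
    plan = [["docker", "pull", image]]          # fetch the new image once
    for name in running:
        plan.append(["docker", "stop", name])   # stop the old container
        plan.append(["docker", "rm", name])     # destroy it
        plan.append(["docker", "run", "-d", "--name", name, image])  # replace it
    return plan


if __name__ == "__main__":
    # Hypothetical registry, repository and container names, for illustration only.
    commands = replacement_plan("registry.example.com/shop/web", "1.4.0",
                                ["web-1", "web-2"])
    for command in commands:
        print(" ".join(command))
```

Because the image reference always carries an explicit version, each release can be traced back to a specific entry in the image repository, which is one way to keep container sprawl manageable.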

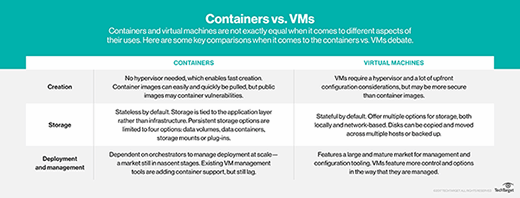


Compare VMs and containers.

Philosophical comparison

While it's difficult to quantify the influence of people on technology adoption, this does not mean there's no influence at all.

Docker was launched as a tool built by developers for developers. It was something that developers understood in their typical development flow and could access much more easily. For example, Docker better supports release automation and full-stack deployments. On the other hand, VMs were introduced at a time when IT had full control of all infrastructure, including development.

In addition, because VMs are tied to a hypervisor and are bigger in size, there is still typically a process to procure them. As a result, they don't support the modern DevOps delivery chain as well as containers do.

Again, though, one important thing to remember is that Docker container software nearly always runs on a VM. And because VMs are a set-it-and-forget-it kind of infrastructure, running Docker on them adds flexibility.

The limitations of VM adoption in development are a result mostly of how organizational structures were set up around VMs. Docker's container software wins on agility, but besides the key differences around size and machine speed, there is nothing that prevents VMs from being a full-blown containerized option for the modern software delivery chain.