The importance of cloud capacity management and how to do it

Running workloads in the cloud gives an organization access to virtually unlimited resources. That's a good thing, but only if the IT team adopts good capacity management practices.

One of the cloud computing model's biggest benefits is that it supports highly flexible and dynamic resource usage. Cloud users consume as many or as few resources as needed, and they have the freedom to adjust their consumption as needs fluctuate.

That does not mean that cloud platforms automatically optimize resource allocation. For most types of cloud services, it's left to the user to determine how many resources cloud workloads will require at any given moment. Amazon Aurora Serverless is one attempt to solve this problem; it automatically adjusts database capacity based on workload demand.

Cloud capacity management is critical to an effective IT strategy. It gives developers, IT teams and DevOps engineers the insights they need to ensure that their workloads have the required resources. At the same time, it lessens the risk that workloads will become overprovisioned in ways that waste money and add unnecessary management overhead.

Why the cloud needs capacity management

Consider a cloud server that hosts several web applications. Proper capacity management ensures that the server runs on a virtual server instance with enough CPU, memory and storage resources to support the applications, but not so many resources that a significant portion goes unused.

Another aspect of capacity management is to determine how many servers to include in a cluster that shares responsibility for hosting an application. In this case, the IT team must be sure to include enough servers to handle the load placed on the application and also keep sufficient backup systems in place to guarantee the application remains available in the event some servers crash.
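The cluster-sizing reasoning above can be sketched as simple arithmetic. This is a minimal illustration, not a sizing formula from any cloud provider: the function name `servers_needed`, the requests-per-second figures and the idea of a fixed number of spare servers are all assumptions made for the example.

```python
import math


def servers_needed(peak_load_rps: float, per_server_rps: float,
                   spare_servers: int = 1) -> int:
    """Servers required to carry peak load, plus spares kept for failover.

    All inputs are hypothetical planning figures, not measured values.
    """
    if per_server_rps <= 0:
        raise ValueError("per_server_rps must be positive")
    if spare_servers < 0:
        raise ValueError("spare_servers cannot be negative")
    # Round up: a fractional server still means one more server.
    base = math.ceil(peak_load_rps / per_server_rps)
    return base + spare_servers


# 4,500 requests/s at 1,000 requests/s per server needs 5 servers;
# two spares bring the cluster to 7.
print(servers_needed(4500, 1000, spare_servers=2))  # prints 7
```

The spare count is a judgment call. It reflects how many simultaneous server failures the application should survive without losing capacity.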

This balancing act is the key to capacity management. An organization wants to avoid both underprovisioning workloads in such a way that they cannot perform adequately, and overprovisioning them by allocating resources they do not need.

Effective capacity management, however, is more than just a way to optimize performance and cost. It helps to:

Provide insight into long-term IT planning. For example, capacity management can help determine which workloads to move to the cloud. Workloads with fast-changing capacities are ideal candidates for the cloud, where resource allocations can be easily scaled up and down.

Determine which infrastructural and application architectures align with your needs. For instance, if you have a virtual server with routinely fluctuating capacity demands, you might find that serverless functions would be a better way to host that workload. Serverless functions allow you to allocate large amounts of resources for short periods in a more cost-effective and easy-to-manage way than is possible with virtual servers.

Arrange the right people and tools. This is a step beyond your team knowing how many resources to allocate to workloads. It's important to find out if you have the organizational resources necessary to assign those resources. You'll need staff on hand to perform the necessary provisioning, and those workers should have the requisite skills to work with the tools you use to manage resource allocation.

Avoid disruptions to users. Wrong-sized workloads can create problems for the people who expect a specific application to be ready for them when they need it. When your workload capacities are well managed, you minimize your risk of having applications or servers fail.

While it has been a part of IT workflows for decades, capacity management has become especially important since the emergence of cloud computing. This is because scalability is a crucial factor in an organization's decision to migrate to the cloud. To capitalize fully on that scalability, however, IT teams must manage resource utilization effectively and continuously. If they can't, they miss one of the chief advantages of cloud architecture. Such companies might do better to stick with on-premises architectures.

Steps to manage cloud capacity

The nature of cloud architectures and services varies widely, so there is no single or simple way to approach cloud capacity. In general, however, an effective cloud capacity management strategy will involve several key steps.

1. Assess baseline capacity requirements

First, determine how many cloud servers, application instances, databases and so on your team requires on average to maintain adequate performance. You'll need to know how much CPU, memory and storage each workload requires -- these are your baseline capacity requirements. It's important to remember that you shouldn't rely on that baseline alone to make resource allocations, especially if demands placed on the workloads often fluctuate. Still, knowing your baseline provides a starting point for capacity planning.
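One way to arrive at a baseline is to average utilization samples collected over a representative period. The sketch below assumes the samples have already been exported from a monitoring tool into plain Python lists; the resource names and numbers are made up for illustration.

```python
from statistics import mean


def baseline(samples: dict[str, list[float]]) -> dict[str, float]:
    """Average each resource's usage samples to get a baseline figure."""
    return {resource: mean(values) for resource, values in samples.items()}


# Hypothetical usage samples for one workload.
usage = {
    "cpu_cores": [1.0, 2.0, 3.0],
    "memory_gib": [4.0, 4.0, 7.0],
}
print(baseline(usage))  # {'cpu_cores': 2.0, 'memory_gib': 5.0}
```

An average hides spikes, which is why the baseline is only a starting point and not an allocation target.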

2. Assess scalability needs

Once you know the baseline requirements for each workload that you run in the cloud, examine the scalability they'll require. Evaluate how much workload demand varies between different times of day, days of the week or seasons of the year. Some of your cloud workloads will have higher scalability requirements than others. For instance, a website with a globally dispersed user base probably won't see as much fluctuation in usage in a full day as a website that caters to users in a specific geographic location, which likely will see most demand during that locale's daytime hours. Likewise, a website for a meal-delivery service will probably experience higher load during mealtimes than at other times of day.
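A rough way to quantify this variation is to group load samples by hour of day and compare the busiest hour with the average hour. The sketch below assumes load is recorded as `(hour, requests_per_second)` pairs. The peak-to-average ratio is just one possible indicator chosen for this example, not a standard metric the article prescribes.

```python
from collections import defaultdict
from statistics import mean


def hourly_profile(samples: list[tuple[int, float]]) -> dict[int, float]:
    """Average the load observed in each hour of the day."""
    by_hour: dict[int, list[float]] = defaultdict(list)
    for hour, load in samples:
        by_hour[hour].append(load)
    return {hour: mean(loads) for hour, loads in sorted(by_hour.items())}


def peak_to_average(profile: dict[int, float]) -> float:
    """Ratio of the busiest hour to the average hour; 1.0 means flat demand."""
    loads = list(profile.values())
    return max(loads) / mean(loads)


# Hypothetical samples: quiet at midnight, busy at noon.
samples = [(0, 100), (12, 250), (12, 350), (18, 200)]
profile = hourly_profile(samples)
print(peak_to_average(profile))  # prints 1.5
```

A ratio close to 1.0 suggests steady demand, as with the globally dispersed site described above. A higher ratio points to a workload that benefits more from scaling.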

3. Make initial resource allocations

For workloads that don't already run in the cloud, you'll need to set initial resource allocations before you start them. Plan to allocate 20% more resources to each workload than the baseline requirements dictate. This provides a healthy buffer in case demand unexpectedly jumps but doesn't unreasonably overprovision your environment.
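The 20% buffer is straightforward to apply to a baseline. In this sketch the function name, the resource names and the baseline figures are assumptions for illustration; only the 20% headroom comes from the guidance above.

```python
HEADROOM = 0.20  # allocate 20% above baseline, per the guidance above


def initial_allocation(baseline: dict[str, float],
                       headroom: float = HEADROOM) -> dict[str, float]:
    """Scale each baseline figure up by the headroom fraction."""
    return {
        resource: round(value * (1 + headroom), 2)
        for resource, value in baseline.items()
    }


# Hypothetical baseline for one workload.
print(initial_allocation({"cpu_cores": 4, "memory_gib": 16}))
```

In practice the result would be rounded up to the nearest instance size the provider actually offers.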

4. Set up autoscaling policies

Mainstream public cloud providers allow you to create so-called autoscaling policies. With these policies in place, the cloud platform automatically increases or decreases the resource allocations assigned to your workloads based on the traffic thresholds you configure in the policies. You can apply autoscaling policies to most types of cloud workloads, including virtual machine instances, databases, containers and serverless functions. However, certain niche categories of cloud workloads, such as IoT devices, typically can't be managed using autoscaling.
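The logic behind a threshold-based autoscaling policy can be sketched in a few lines. This is a local simulation and not any provider's API. The `ScalingPolicy` fields, the one-instance step and the CPU percentages are hypothetical, and real services offer more options than this.

```python
from dataclasses import dataclass


@dataclass
class ScalingPolicy:
    scale_out_above: float  # utilization % that triggers adding an instance
    scale_in_below: float   # utilization % that triggers removing one
    min_instances: int
    max_instances: int


def desired_instances(current: int, utilization: float,
                      policy: ScalingPolicy) -> int:
    """Return the instance count the policy would ask for next."""
    if utilization > policy.scale_out_above:
        target = current + 1
    elif utilization < policy.scale_in_below:
        target = current - 1
    else:
        target = current
    # Never go outside the configured floor and ceiling.
    return max(policy.min_instances, min(policy.max_instances, target))


policy = ScalingPolicy(scale_out_above=70, scale_in_below=30,
                       min_instances=2, max_instances=10)
print(desired_instances(4, 85, policy))  # prints 5
print(desired_instances(2, 10, policy))  # prints 2 (already at the floor)
```

The gap between the two thresholds keeps the policy from adding and removing instances on every small fluctuation.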

5. Collect and analyze capacity data

Whether or not you configure autoscaling for your workloads, it's important to constantly assess how well the allocations work and adjust accordingly. Consider these metrics and factors:

- How often do your autoscaling policies trigger? If they are rarely applied because your workloads never reach the minimum thresholds for autoscaling, the workloads are likely overprovisioned. It may be time to reconfigure your thresholds.

- How do your actual cloud costs, as reflected in monthly bills, compare to your anticipated costs? Beating cost expectations is one sign that you are managing capacity well; when you find cloud expenses are too high, you could probably do a better job at capacity management.

- How often do you experience disruptions or downtime related to capacity or resource allocation?

- How often does your team intervene manually to correct a capacity issue? You might reduce the need for manual changes with more intensive autoscaling or migrate your workload to a different type of architecture, such as serverless.

- Do the baseline workload requirements and the anticipated scalability needs that you identified for each workload remain consistent with actual performance?
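The questions above can be turned into a simple periodic review. The sketch below assumes the figures were gathered by hand or exported from monitoring and billing tools. The `MonthlyReview` fields and the wording of each finding are illustrative, not a standard report format.

```python
from dataclasses import dataclass


@dataclass
class MonthlyReview:
    scaling_events: int        # times an autoscaling policy triggered
    capacity_incidents: int    # disruptions traced to resource allocation
    manual_interventions: int  # times staff adjusted capacity by hand
    actual_cost: float
    expected_cost: float


def findings(review: MonthlyReview) -> list[str]:
    """Flag the capacity signals that deserve a closer look."""
    notes = []
    if review.scaling_events == 0:
        notes.append("autoscaling never triggered: possible overprovisioning")
    if review.expected_cost > 0 and review.actual_cost > review.expected_cost:
        over = (review.actual_cost - review.expected_cost) / review.expected_cost * 100
        notes.append(f"cost is {over:.0f}% over expectation")
    if review.capacity_incidents > 0:
        notes.append(f"{review.capacity_incidents} capacity-related incidents")
    if review.manual_interventions > 0:
        notes.append(f"{review.manual_interventions} manual capacity changes")
    return notes


# Hypothetical month: no scaling events, over budget, three manual fixes.
for note in findings(MonthlyReview(0, 0, 3, 1200.0, 1000.0)):
    print(note)
```

An empty list does not prove capacity is well managed. It only means none of these particular signals fired during the review period.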

Plan for long-term cloud capacity changes

The strategies above will help you manage cloud capacity on an everyday basis. Additionally, you'll need to plan for long-term capacity needs so that your IT infrastructure evolves appropriately over time to meet changing workload requirements.

Traditionally, long-term capacity management centered on the purchase and deployment process for new servers, storage media and other on-premises data center infrastructure. This is irrelevant in the cloud, where a service provider already has made those investments on a vast scale and offers as much infrastructure as any customer needs.

Instead, long-term capacity management for the cloud should focus on how to evolve your cloud architecture over time in response to changing capacity requirements. If today you use just one cloud, for example, assess your long-term workload expectations and think about whether it might make sense to adopt a multi-cloud strategy to meet future capacity requirements. Or you might decide that the organization's long-term capacity efficiency will be improved with a decision to refactor applications to run as microservices inside containers.

Cloud capacity management tools

Cloud capacity management is a complex, multifaceted process, and there is no single tool that will meet all of your capacity planning needs. A variety of tool types can assist in the process, including:

- Monitoring and log management. Data collected by monitoring and logging tools such as Amazon CloudWatch, Azure Monitor and third-party monitoring platforms can help you keep track of performance trends and alert you to changing capacity needs.

- Infrastructure as code. Infrastructure-as-code tools automate infrastructure setup and resource allocation, so it is much easier and faster to reconfigure allocations in response to capacity changes.

- Cost calculators. To manage the financial aspects of capacity planning, the cost-prediction tools that cloud providers offer are useful. They can help evaluate the costs associated with different resource allocations or workload types.

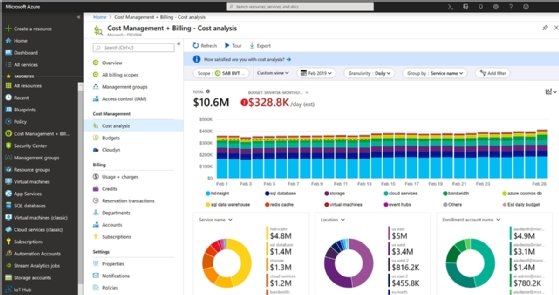

- Right-sizing and cost management. Cloud providers offer tools designed to help predict capacity requirements. AWS has a cost management tool, as does Microsoft Azure. Some third-party application performance management (APM) tools also offer right-sizing features.

Capacity management is important in any IT environment, but it's especially critical if you want to get the most out of cloud environments. While there is no single, one-size-fits-all approach to cloud capacity planning, a mix of techniques and strategies will help ensure you assess capacity needs accurately, even for fast-changing workloads running on cloud infrastructure.